About

BodyMouth is a virtual instrument that produces speech in real time in response to body position. Precise position and orientation data, streamed from VR body trackers worn by one or more performers, is mapped to adjustable speech parameters such as tongue position, airflow intensity and vocal cord tension. Using a precise sequence of motions, performers can produce a variety of multisyllabic words with nuanced timing, inflection and pronunciation.
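As a rough illustration of the mapping idea, the sketch below linearly rescales a single tracker coordinate into a synthesizer parameter range. The function name, the tracker height range and the choice of target parameter are all hypothetical; the actual mappings used in BodyMouth are not described here and are likely more involved.

```python
def map_range(value, in_min, in_max, out_min, out_max):
    """Linearly rescale value from [in_min, in_max] to [out_min, out_max].

    Inputs outside the input range are clamped to its edges.
    """
    if in_max == in_min:
        raise ValueError("input range must have non-zero width")
    t = (value - in_min) / (in_max - in_min)
    t = max(0.0, min(1.0, t))  # clamp so out-of-range poses stay valid
    return out_min + t * (out_max - out_min)


# Hypothetical example: a tracker's height in meters (0.8 to 1.8)
# drives a normalized vocal cord tension parameter (0.0 to 1.0).
tension = map_range(1.3, 0.8, 1.8, 0.0, 1.0)
```

Clamping keeps the parameter inside its valid range when a performer reaches beyond the calibrated motion range.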

The bespoke instrument was created for transmedia playwright Kat Mustatea’s experimental theater work, Ielele, which draws on Romanian folklore to depict mythological creatures with augmented bodies and voices. More information about the creative side of the work can be found on Kat’s website.

Sound Processing

At the core of BodyMouth is a custom-made polyphonic refactoring of Pink Trombone, an incredible open-source parametric voice synthesizer created by Neil Thapen as a speech therapy tool. Its simple user interaction and real-time capabilities give it great potential for live performance. Making it polyphonic required refactoring it with new objects from the Web Audio API, which significantly reduced the processing demands of the software and allowed multiple voice-processing modules to run simultaneously.

Interfacing with the body trackers, which aren’t designed for use outside a VR context, requires a custom Python script that streams each tracker’s position and orientation data to the voice processor software. When the software receives updated data, it calculates which consonant is being spoken, how far along the pronunciation of that consonant the body position is, and whether the letter falls at the beginning or end of a syllable, which affects the pronunciation of certain letters. These values are then used to index a large JSON file that contains per-frame speech synthesizer values for each consonant. The retrieved values are fed directly into the synthesizer to be “spoken”, and the process repeats with a new set of tracker values just a few milliseconds later.
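The lookup step described above might be sketched as follows. This is only an assumption about how such a table could be organized: the JSON layout, the `onset`/`coda` keys, the parameter names `tongue_index` and `lip_diameter`, and the function `lookup_frame` are all hypothetical and are not taken from the BodyMouth source.

```python
import json

# Hypothetical table layout: consonant -> syllable position -> list of frames.
FRAMES_JSON = """
{
  "m": {
    "onset": [{"tongue_index": 12.0, "lip_diameter": 0.0},
              {"tongue_index": 12.5, "lip_diameter": 0.6},
              {"tongue_index": 13.0, "lip_diameter": 1.2}],
    "coda":  [{"tongue_index": 13.0, "lip_diameter": 1.2},
              {"tongue_index": 12.0, "lip_diameter": 0.0}]
  }
}
"""


def lookup_frame(table, consonant, syllable_position, progress):
    """Return the synthesizer frame for a consonant at a given progress.

    progress is the fraction (0.0 to 1.0) of the consonant's pronunciation
    that the current body position corresponds to.
    """
    frames = table[consonant][syllable_position]
    progress = max(0.0, min(1.0, progress))
    # Scale progress to a frame index, keeping 1.0 inside the list.
    index = min(int(progress * len(frames)), len(frames) - 1)
    return frames[index]


table = json.loads(FRAMES_JSON)
frame = lookup_frame(table, "m", "onset", 0.5)
```

Precomputing frames and indexing them keeps the per-update work small, which suits a loop that repeats every few milliseconds.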

Scene Editor

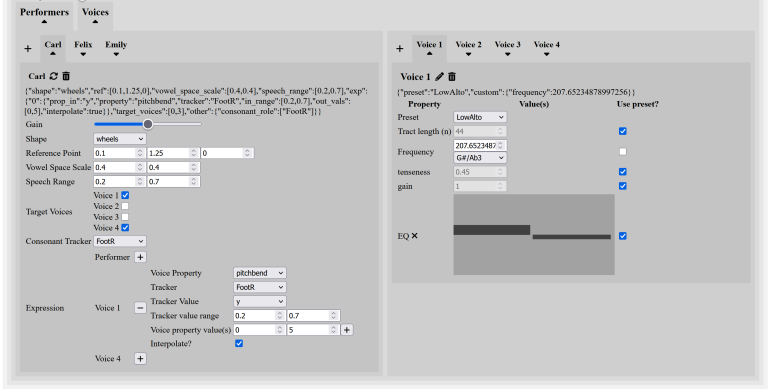

A dynamic front-end UI built in React allows scenes to be created and edited as needed. Each scene can contain any number (subject to computer hardware limitations) of performers and software voices. By creating sequences of scenes, entire performances can be created and edited with relative ease.

Performer settings can be customized per scene to adjust each performer’s position on stage and the size of the motions they must perform. Performers can control any number of software voices, allowing one person to speak through an entire chorus of synthesized voices. Each controlled voice adds optional “expression” settings for the performer, such as bending the pitch up or down or panning the voice left or right. These expressive parameters can be bound to a specific tracker on the performer’s body, creating additional aesthetic and expressive possibilities.
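To make the “expression” idea concrete, here is a minimal sketch of how a tracker value could drive pitch bend and panning. The function names and the choice of units (semitones for bend, meters for lateral offset) are assumptions for illustration; only the equal-tempered relation between semitones and frequency is standard.

```python
def bend_frequency(base_hz, semitones):
    """Shift a frequency by a number of equal-tempered semitones."""
    return base_hz * 2.0 ** (semitones / 12.0)


def tracker_to_pan(x_meters, half_width_meters):
    """Map a tracker's lateral offset to a stereo pan value in [-1, 1].

    half_width_meters is the (hypothetical) offset treated as fully left or right.
    """
    if half_width_meters <= 0:
        raise ValueError("half_width_meters must be positive")
    return max(-1.0, min(1.0, x_meters / half_width_meters))


# Hypothetical usage: bend a 220 Hz voice up one octave and pan it half right.
bent = bend_frequency(220.0, 12)
pan = tracker_to_pan(0.5, 1.0)
```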

Software voices have their own highly customizable parameters, including volume, fundamental frequency, voice “tenseness”, the length of the simulated vocal tract and a simple 2-band equalizer. By adjusting these parameters, a wide variety of voice types can be created, from a lofty soprano to a deep Tuvan throat singer, and from a soft whisper to a loud, strained shout.
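One way to picture how scenes, performers and voices relate is the small data model below. It is a sketch under assumptions: the class names, field names, default values and the `voices_for` helper are hypothetical and do not reflect the actual scene format used by the editor.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Voice:
    name: str
    volume: float = 0.8
    fundamental_hz: float = 140.0
    tenseness: float = 0.6
    tract_length: float = 1.0      # hypothetical scale factor
    eq_low_gain_db: float = 0.0    # 2-band equalizer, low band
    eq_high_gain_db: float = 0.0   # 2-band equalizer, high band


@dataclass
class Performer:
    name: str
    stage_x: float = 0.0           # position on stage
    motion_scale: float = 1.0      # size of the required motions
    voice_names: List[str] = field(default_factory=list)


@dataclass
class Scene:
    title: str
    performers: List[Performer] = field(default_factory=list)
    voices: List[Voice] = field(default_factory=list)

    def voices_for(self, performer_name):
        """Return the voices controlled by the named performer."""
        for performer in self.performers:
            if performer.name == performer_name:
                return [v for v in self.voices if v.name in performer.voice_names]
        return []


# Hypothetical scene: one performer speaking through two of three voices.
chorus = Scene(
    title="Chorus",
    performers=[Performer(name="A", voice_names=["soprano", "bass"])],
    voices=[
        Voice(name="soprano", fundamental_hz=520.0),
        Voice(name="bass", fundamental_hz=80.0),
        Voice(name="unused"),
    ],
)
```

Referring to voices by name from each performer lets one performer drive several voices, matching the chorus use case described earlier.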